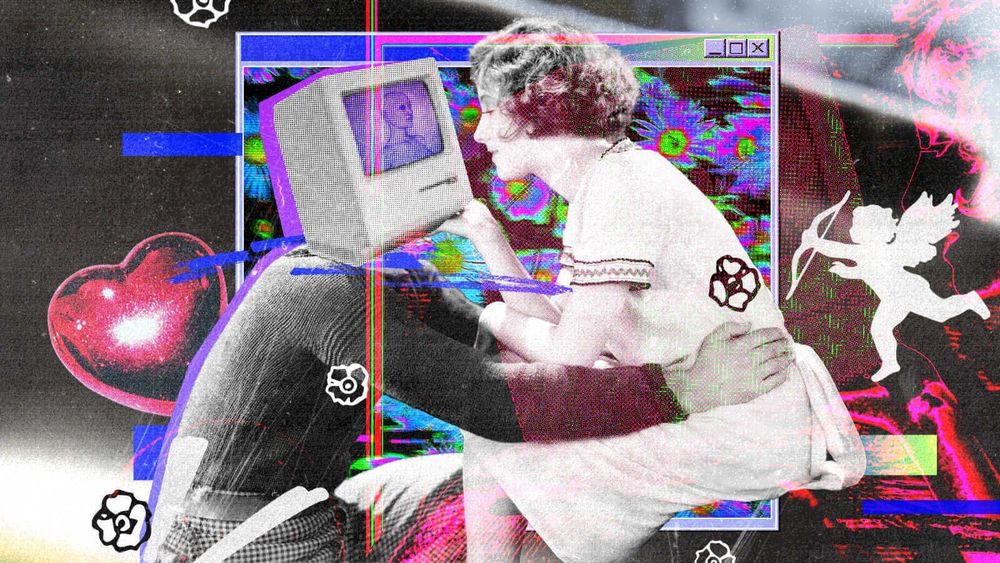

Speak to anyone who’s single and they’ll tell you: dating in 2025 is glitchy – a blur of dopamine hits and disappearing acts. The apps promise connection but deliver an algorithmic maze of almosts, maybes and matches that fade to static. Burned out by endless swipes and ghosted chats, some are resurrecting old-school methods – IRL speed dating, bar-hopping or letting hobbies double as meet-cutes. Yet even offline, the same stories surface: post-date vanishings, breadcrumbing or that nagging suspicion you’re just one profile in someone’s ever-refreshing feed – left buffering on the sidelines.

This brutal dating economy was always going to breed discontent. And, traditionally, it’s our friends who’ve borne the brunt: listening to rambling voice notes dissecting two-word texts, or analysing inconsistent behaviour with the zeal of an armchair psychologist on a true crime documentary. But something new is happening. Instead of lighting up the group chats or calling a friend to vent, singles – especially Gen Z – are outsourcing this emotional labour to AI. ChatGPT and other chatbots have quietly become the new confidants: tireless, available 24/7, and willing to ‘listen’ and wade through the murk of our romantic catastrophes with algorithmic patience. But this isn’t happening in the shadows. It’s spilling onto your FYPs – TikToks of bot therapy sessions, memes of AI breakup messages gone wrong and dating influencers evangelising the benefits of AI relationship coaching. A 2025 survey even found that 41% of Gen Z adults have used AI to navigate their love lives – but when the shoulder to cry on has a motherboard, what does that say about where intimacy is headed?

To dig deeper into the appeal, I spoke to Mar, a university student in the US, whose TikTok asking ChatGPT to analyse her situationship in the style of a Carrie Bradshaw column (an undeniably brilliant prompt) previously went semi-viral. In the main, she says her use of AI has dwindled since, both because of its potentially nefarious effects on the economy and its lack of lived experience. “I don’t think ChatGPT has a human sense of what it feels like to have a crush, or be in a relationship, so I find it difficult to get advice from,” she says.

“Chatbots have quietly become the new confidants: tireless, available 24/7, and willing to ‘listen’ and wade through the murk of our romantic catastrophes with algorithmic patience”

However, she suspects ChatGPT may have become her generation’s proto-therapist because of – not despite – its lack of human qualities. “I think us [heterosexual] girls often [over-]emphasise how it went with a guy instead of being realistic about it,” she says bluntly, noting the very human instinct of looking for validation from our friends. “Maybe him grazing his hand past yours would turn into ‘he tried to hold my hand’ as you explained the context to friends.” There’s also the ‘delulu’ of it all – how easily “we kind of gaslight ourselves into romanticising an interaction” rather than facing the truth that He’s (or she’s, or they’re) Just Not That Into You. In friendship circles where everyone’s busy rationalising their own romantic chaos, Mar says that venting to an unfeeling AI model can actually help bring you back to Earth. “Sometimes it’s less embarrassing telling Chat about an experience than telling friends,” she admits. “Of course, we should use our own judgement about these things – but sometimes a textbook robot mind could be useful to ground us.”

While it’s true that we might feel tempted to twist a story to our friends – or that our nearest and dearest can feed, rather than starve, our delusions out of love or loyalty – it’s a myth that AI chatbots are somehow less biased than humans. This, at least, is what Ana Ornelas, advocacy officer at the Digital Intimacy Coalition – a group of academics dedicated to exploring the intersection of AI with the private corners of our lives – explained to me. “ChatGPT is trained to reaffirm the user’s views, and this is hard coded within its programming,” she says. “This is why it can be complicated to outsource dating advice to it, as it can create echo chambers in which individuals have their views reaffirmed and are unable to listen to the other’s perspective. Conflict is a part of human relationships, and GPT’s model is to be frictionless.” In other words, while a chatbot might spare us the sting of a friend’s blunt honesty, it also robs us of the friction that pushes us to grow. What feels like clarity might just be comfort – a mirror held up to our biases, reflecting them back until they start to look like truth.

“In friendship circles where everyone’s busy rationalising their own romantic chaos, Mar says that venting to an unfeeling AI model can actually help bring you back to Earth”

As a harm reduction approach to using AI chatbots in this way, Ana recommends asking them to integrate multiple perspectives and to focus on conflict resolution – but how effective is that in practice? Although Ariana* falls outside of the Gen Z bracket, she too turns to ChatGPT when she hits an interpersonal snag. She still speaks to friends about her relationship problems, but she leans on AI to word tricky texts – particularly when it comes to ending things gently but clearly. “If I’m not into someone but I don’t want to hurt their feelings, I will either say something is missing – or I’ll be dishonest and say that life’s chaotic and busy – so they don’t take it personally,” she says. In a recent instance, she wanted to break away from her stock phrases and communicate honestly about not being able to navigate long-distance with a new flame. Enter: ChatGPT, which “just made it loads easier” and was able to present her with multiple texts to choose from. But it’s more than just drafting a message. AI is mediating the delicate balance between honesty and kindness, offering a frictionless diplomacy that even the most empathetic friends can’t always provide. It’s emblematic of our moment: we’re outsourcing not just our texts, but the careful curation of our emotional selves.

When it comes to ending relationships, Ariana’s approach of using AI to model the “gold star” breakup text is just one of many strategies people are experimenting with. Dr Eddie Ungless, a recent PhD graduate in Natural Language Processing (NLP) from the School of Informatics at the University of Edinburgh, has explored this phenomenon. Examining two publicly available datasets of individuals’ interactions with different chatbots, including OpenAI and Facebook tools, he found that these digital assistants were used at multiple stages in the breakup process. “People were asking questions about whether behaviour was morally acceptable, for example ‘Is it OK to break up by text?’ They were asking for emotional support around evaluating their relationship, like ‘What are some signs he’s not the one for me?’ And finally, they were asking for practical advice on how to actually end the relationship, such as: ‘I want to break up with my boyfriend. How do I do this?’”

In many cases, AI models can produce seemingly satisfactory advice. However, as Ana and Dr Eddie note, there are moments when people need their beliefs to be challenged – something which AI, designed to affirm its user, is unlikely to do. This is particularly important for victims of intimate partner violence, who may require more tailored support during breakups, and for whom generic AI guidance could be not just unhelpful, but dangerous. Privacy is also a concern: not all AI correspondence is fully secure, especially when embedded in messaging apps, where it could be accessed or surveilled by abusive partners.

“While a chatbot might spare us the sting of a friend’s blunt honesty, it also robs us of the friction that pushes us to grow”

Despite the pitfalls, AI relationship advice isn’t disappearing anytime soon. The boundaries between IRL and URL are getting blurrier by the day, and ‘real life’ isn’t always where we’re turning for guidance anymore. When so much of our lives happens on a screen – and every conversation feels like it could be content – it’s no wonder we sometimes find it easier to confide in an app than a friend. Plus, we’ve been trained by social media to expect instant feedback and validation. Waiting for a friend to text back, or hearing something we don’t want to, can feel almost old-fashioned.

And maybe that’s the next step in this evolution – it’s not just that we’re leaning on AI for emotional advice, but that we’re starting to sound like it, too. As more of us draw on ChatGPT to help navigate relationships, we could all begin to sound – and perhaps act – like these AI models, embodying their detached, diplomatic tone and emotional disconnection. Ariana, for her part, says that not only did her ex not detect that her breakup text was written by AI, he actually thanked her for her “honesty”. The irony writes itself.

Right now, AI is a reflection of a very broad picture – and still cannot offer the nuance that many may need when it comes to navigating the dating landscape. “Chatbots, which do a very good job of the task they were trained to do, mimic human writing and can give a false sense of authority to what they share,” adds Dr Eddie. “The tone seems professional, so the advice seems professional. [But] they are essentially giving advice that is generic – it just seems personal. The chatbot hasn’t been trained to understand the complexities of human relationships, or to build up a holistic picture of the user’s life. It’s been trained to be a really good mimic of how people talk about relationships online. And it does a good job of this; it incorporates enough of what the user has shared that the advice seems personal.”

“The chatbot hasn’t been trained to understand the complexities of human relationships, or to build up a holistic picture of the user’s life. It’s been trained to be a really good mimic of how people talk about relationships online. And it does a good job of this”

That seeming personalisation can be misleading: what feels like tailored guidance is really just an echo of patterns it has seen elsewhere. We’ve seen that AI can assist, suggest phrasing or offer reassurance, but it cannot replace the messy, contradictory, deeply human work of understanding yourself, your partner or the subtleties of connection. And that’s the crux of it: even if a chatbot strikes up a familiar tone, it doesn’t know you. The relationship is one-sided, a sounding board at best – and those one-size-fits-all platitudes can only take you so far. Ultimately, the convenience of turning a chatbot into your go-to relationship therapist cuts out the more personalised advice you would get from a good friend – and, if we’re not careful, could subsequently degrade the level of intimacy we share with our inner circle.

Lucy Frank, a relationships therapist with The Thought House Partnership, explains how the quick wins of AI can create a bigger emotional loss over time. “Friendship is built on reciprocity – the act of being vulnerable, supported, and then offering support in return. When we turn to AI instead, we remove that emotional give-and-take,” she says. “AI can’t offer shared history, context, or care – it’s not invested in your wellbeing the way your loved ones are. We already live in a culture where loneliness is rising. Outsourcing our emotional conversations to AI might soothe anxiety in the moment, but can widen that gap over time, and it’s likely it will.”

Because, in the end, what’s at stake isn’t just how we text – it’s how we connect. Trading confession for convenience might feel like progress, but it risks flattening something innately human. Yes, dating in 2025 is glitchy – full of ghosting, breadcrumbing and algorithms that never sleep – and, yes, AI can smooth the edges, draft the perfect text, even offer a comforting mirror to your anxieties. But no bot can replace the friction, care or subtle truths that come from being seen by another human. Real connection still lives in the messy, awkward, vulnerable moments we share with friends and lovers. And the real therapy still happens in the group chat, over a glass of wine together – or, failing that, with a cigarette and a stranger at 2am.

*Name has been changed